The Managers' Guide № 137

Weekly, hand-picked engineering leadership nuggets of wisdom

The best part of having a doctorate is that any time someone asks me to do something I don’t want to do, I write “absolutely not” on a Post-it and say, “Sorry, can’t, I have a doctor’s note.”

Dr. Amy

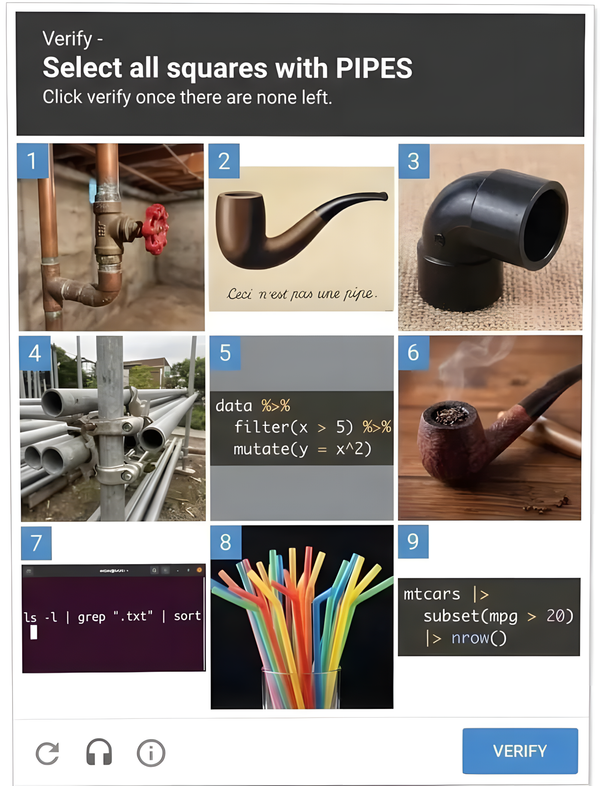

Why Estimates Fail (And Why You Still Need Them)

- 🌡️ #NoEstimates fixed the wrong thing: The movement was reacting to a real pathology (estimates being weaponized as performance targets), but its fix is like smashing a broken thermometer and declaring that temperature doesn't exist. The broken thermometer was the problem, not the concept of heat.

- 🤝 Estimates aren't for the team — they're for everyone around it: External commitments, inter-team dependencies, and ROI trade-offs all require some forecast. Refusing to estimate doesn't solve coordination problems; it just opts out of the game and leaves downstream humans to guess.

- 🧩 Task estimation lives in Cynefin's Complex domain, not Complicated: Joseph Pelrine's research with 300+ agile practitioners consistently landed estimation in Complex — meaning cause and effect are only knowable in retrospect. Even great teams produce only mediocre estimates, because the ceiling on accuracy is a property of the domain, not team maturity.

- 📉 The Cone of Uncertainty is tragically ironic: Estimates become accurate right when you no longer need them. Organizations make their firmest commitments at the concept stage — exactly when uncertainty is widest (up to 16x) — and the cone only narrows through learning (scope decisions, spikes, actual code), not through more meetings.

- 🔢 Fibonacci's non-linearity is doing epistemic work: A 13-point story isn't saying “this is exactly 13” — it's saying “the uncertainty band here is wider than the estimate itself.” The gaps mirror how complexity actually scales, and anything over 8 is usually a story hiding complexity that needs splitting.

- 💬 The number is a side effect — alignment is the point: When one person says 3 and another says 13, the disagreement is the value. That's where hidden dependencies and technical constraints surface. Cancelling estimation meetings because they're run badly is the wrong fix.

- ⚖️ Separate three things most orgs conflate: An estimate is a probabilistic forecast with assumptions attached; a plan is a commitment to a process (“we'll work two weeks, then re-forecast”); a commitment is a promise with consequences, made rarely and only when the cone has narrowed. When pushed to commit, commit to priorities, not timelines.

- 🛠️ Practical moves that compound: estimate late, not early; give ranges, not points; make assumptions visible; track accuracy to calibrate (never to punish, which just teaches padding); and never let someone outside the work impose a timeline on those executing it.

- 🎯 The uncomfortable truth for both camps: Estimates are communication, not calculation. Their job is to enable decisions under uncertainty, not to predict the future. The real choice isn't “bad estimates vs. no estimates” — it's between the unconscious low-quality estimates your org will make anyway, and explicit, humble, range-based ones that give people something real to work with.
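To make "give ranges, not points" and "track accuracy to calibrate" a little more concrete, here is a minimal Python sketch. It assumes a team records each forecast as a low/high range in days next to the actual duration; the `RangeEstimate` record, the `hit_rate` and `calibration_advice` functions, the 80% target, and the sample tasks are all hypothetical and made up for illustration, not taken from any tool or from the article. The output is a team-level calibration signal, not a score for individuals.

```python
from dataclasses import dataclass


@dataclass
class RangeEstimate:
    """Hypothetical record of one forecast: a low/high range plus the actual outcome, in days."""
    task: str
    low: float
    high: float
    actual: float


def hit_rate(estimates: list[RangeEstimate]) -> float:
    """Fraction of tasks whose actual duration landed inside the forecast range."""
    if not estimates:
        raise ValueError("need at least one estimate")
    hits = sum(1 for e in estimates if e.low <= e.actual <= e.high)
    return hits / len(estimates)


def calibration_advice(rate: float, target: float = 0.8) -> str:
    """Compare the observed hit rate with the confidence the team intended its ranges to carry.

    The 0.8 default target is an assumption for this sketch, not a recommended standard.
    """
    if rate < target:
        return "ranges too narrow: widen them or surface hidden assumptions"
    return "ranges are at least as wide as intended"


# Illustrative, made-up history of range forecasts and actual durations (days).
history = [
    RangeEstimate("login flow", low=3, high=6, actual=5),
    RangeEstimate("billing export", low=2, high=4, actual=7),
    RangeEstimate("search filters", low=5, high=10, actual=9),
    RangeEstimate("audit log", low=1, high=3, actual=2),
]

rate = hit_rate(history)
print(f"hit rate: {rate:.0%}")  # 3 of 4 actuals fall inside their ranges -> "hit rate: 75%"
print(calibration_advice(rate))
```

The design choice here is to feed the result back into how wide the next ranges are: if ranges the team meant to hit 80% of the time only hit 75%, the response is wider ranges, earlier spikes, or more visible assumptions, not pressure on whoever gave the estimate.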

The green dot trap

- 🟢 The Green Dot Trap — Leaders fall into the pattern of responding immediately to every Slack message because it feels productive and keeps them "visibly available," but this creates more problems than it solves

- ⚡ Urgency Culture Creation — When you respond to everything immediately, you signal that everything is urgent, causing your entire team to live in their notifications and abandon thoughtful responses

- 🤔 The Five Message Layers — Every Slack message operates at one of five levels: thinking out loud, sharing information, proposing a frame, stating a position, or making a decision — but they all look identical in text

- 📝 Writing is Thinking — Slack's text-based format is designed to give you space between reading and responding, but most leaders throw away this advantage by treating it like a walkie-talkie

- 🔇 Signal vs Noise Problem — After sending multiple quick, unclear messages, leaders become "unreadable," and when real crises hit, teams can't distinguish genuine decisions from reflexive responses

- ⏰ The 30-Minute Rule — Build in deliberate response latency: unless something is actively on fire, wait at least 30 minutes so you respond thoughtfully rather than reactively

- 🏷️ Label Your Layer — Tag your messages with prefixes like "Thinking out loud:" or "Decision:" to help your team understand what kind of response you're giving and what's expected from them

- 👥 Modeling Behavior — Your team watches how you use Slack more closely than you think — if you're always responding immediately, they'll mirror that frantic energy throughout the organization

Willingness to look stupid is a genuine moat in creative work

- 🧠 Nobel Prize curse — Success creates paralysis: once you win recognition, the pressure to maintain that standard often stops great work from happening, as noted in Richard Hamming's "You and Your Research"

- 👶 Youth advantage isn't intelligence — Young people excel at innovation not because they're smarter, but because nobody expects much from them, so they're free to explore "weird, silly, and seemingly-bad-but-actually-good ideas"

- 🎂 Aadil's Law — The willingness to tolerate stupidity is directly proportional to the quality of ideas you'll eventually produce; breakthrough creativity requires cycling through bad ideas first

- 🪼 Evolution's stupidity strategy — Jellyfish survived 500 million years through evolution's willingness to produce countless failed organisms; breakthrough innovation requires the same tolerance for "failure"

- 😨 Two failure modes — Oversharing leads to being tuned out, but undersharing (fear of looking stupid) leads to bland, safe ideas that never risk or achieve greatness

- 🎯 Reframe the goal — Instead of trying to share something good, just try to share something at all — shift from selection-focused to production-focused creativity

- 🔄 The courage regression — The author's past self was "worse at almost everything" but had more courage to publish imperfect work, leading to occasional breakthroughs through sheer volume

The Courage to Confront: How Real Leaders Balance Candor and Care

- 🐘 Meeting room elephants are culture killers — The real rot in organizations comes from quiet avoidance, not dramatic confrontations. When leaders stay silent about obvious problems, it breeds resentment and erodes trust over time.

- 🎭 False kindness is actually cruel — Avoiding difficult conversations to "protect feelings" just postpones pain and makes it worse. Real kindness means helping people grow, not keeping them comfortable in dysfunction.

- ⚖️ Balance candor with care — "Candor without care is cruel. Care without candor is cowardice." The best leaders deliver honest feedback with respect and dignity, not as a weapon or hidden behind sugar-coating.

- 🔍 Truth-telling deepens relationships — Contrary to fear, honest conversations strengthen bonds when delivered with respect. People can handle hard truths; they can't handle hidden truths or pretense.

- 🧠 Smart leaders often struggle with people — High-IQ leaders can become overly reliant on logic, impatient with slower processors, and emotionally underdeveloped. Their intelligence can create blind spots about human dynamics.

- ⚡ Intelligence creates leadership distortions — Brilliant leaders often treat relationships like cognitive systems, underestimate emotions, and develop subtle arrogance that assumes others are "the problem" when they're slower or more emotional.

- 🎯 Impact matters more than intent — Leaders underestimate how their power magnifies everything — a passing comment can ruin someone's weekend, and blunt critiques can stick for months, regardless of good intentions.

- 🔄 Avoidance creates passive cultures — When leaders interrupt, rush ahead, or dismiss concerns, teams learn to defer quickly and avoid ownership. Then leaders complain about passive teams without seeing their role in creating that dynamic.

Stop Thinking of AI as a Coworker. It's an Exoskeleton.

- 🤖 The Wrong AI Metaphor — Companies treating AI as an autonomous "coworker" get disappointed, while those treating it as an amplifier of human capability see transformative results

- 🦾 The Exoskeleton Model — AI should work like physical exoskeletons: Ford's EksoVest reduced injuries by 83%, BMW saw 30-40% reduction in worker effort, and military exoskeletons provide 20:1 strength amplification — all while keeping humans in control

- 🏃 Amplification Over Replacement — Stanford's ankle exoskeleton made a 26.2-mile marathon feel like running 24.9 miles; the human still does the work, just more efficiently and sustainably

- 🎯 Micro-Agent Architecture — Break down jobs into 47 discrete tasks rather than entire roles; build focused AI components that do one thing reliably (like automated commit messages) while keeping humans in the decision loop

- 📊 Context is Everything — Autonomous agents fail because they lack implicit human context about company priorities, competitive dynamics, and strategic decisions that "never got written down anywhere"

- 🔗 The Product Graph Solution — Kasava combines automated code analysis with human judgment to create a living representation of what your product actually is, not what marketing says it is

- 💪 Compounding Effects Matter — A 30% reduction in muscle stress doesn't just mean less fatigue — it means fewer injuries, longer careers, and preserved cognitive resources for creative work that actually moves products forward

- 📈 Market Reality Check — The exoskeleton market is growing 20% annually toward $2 billion by 2030, but it's about amplifying human capability, not replacing workers; the same pattern will apply to AI

That’s all for this week’s edition

I hope you liked it and learned something. If you did, don’t forget to give a thumbs-up, add your thoughts as comments, and share this issue with your friends and network.

See you all next week 👋

Oh, and if someone forwarded this email to you, sign up if you found it useful 👇