The Managers' Guide № 134

Weekly, hand-picked engineering leadership nuggets of wisdom

Remember: The dumbest person you know is being told 'you are absolutely right' by an LLM right now.

David Gerard

Why Estimates Fail (And Why You Still Need Them)

- 🌡️ #NoEstimates was reacting to a real problem — estimates being misused as performance targets — but "don't estimate" is like smashing the thermometer because it gives bad news

- 🏢 Organizations genuinely need estimates for external commitments, inter-team coordination, and ROI trade-offs — the team isn't the only audience

- 🧩 Per Joseph Pelrine's research, software estimation lives in the Complex domain (Cynefin), meaning cause and effect are only knowable in retrospect — even great teams will only ever produce mediocre estimates, and that's a property of the domain, not the team

- 📉 The Cone of Uncertainty means estimates are least reliable exactly when they're most needed — and the cone only narrows through actual learning, not meetings

- 🔢 Fibonacci sizing isn't a quirky ritual — the non-linear gaps encode uncertainty, and the disagreements during estimation are where real alignment happens

- 🪤 The core dysfunction: estimates become commitments through social pressure, not logic — and estimates imposed on teams (rather than owned by them) make this worse

- ✅ The fix is separating three things: estimate (probabilistic range with assumptions), plan (commitment to a process), and commitment (a rare, deliberate promise)

- 📋 Better practices: estimate late, give ranges not points, make assumptions explicit, track accuracy without punishment, and split anything over an 8
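
To make "probabilistic range with assumptions" a little more concrete, here is a minimal TypeScript sketch of what such an estimate record might look like. Every name in it (`Estimate`, `needsSplit`, `hitRate`) and every number is a hypothetical chosen for illustration, not something taken from the article.

```typescript
// Hypothetical shapes for illustration only; none of these names come from the article.
const FIBONACCI_SIZES = [1, 2, 3, 5, 8, 13, 21] as const;
type StoryPoints = (typeof FIBONACCI_SIZES)[number];

interface Estimate {
  task: string;
  size: StoryPoints;      // non-linear gaps stand in for growing uncertainty
  lowDays: number;        // optimistic end of the range
  highDays: number;       // pessimistic end of the range
  assumptions: string[];  // written down so they can be challenged later
}

// "Split anything over an 8": flag large items instead of estimating them as-is.
function needsSplit(estimate: Estimate): boolean {
  return estimate.size > 8;
}

// Did the actual duration land inside the stated range?
function landedInRange(estimate: Estimate, actualDays: number): boolean {
  return actualDays >= estimate.lowDays && actualDays <= estimate.highDays;
}

// Share of past estimates whose actuals fell inside their range.
// Meant as a learning signal for the team, not a performance score.
function hitRate(history: Array<{ estimate: Estimate; actualDays: number }>): number {
  if (history.length === 0) return 0;
  const hits = history.filter((h) => landedInRange(h.estimate, h.actualDays)).length;
  return hits / history.length;
}

const checkout: Estimate = {
  task: "Rework checkout flow",
  size: 13,
  lowDays: 8,
  highDays: 20,
  assumptions: ["Payment provider API stays unchanged"],
};

const banner: Estimate = {
  task: "Add promo banner",
  size: 2,
  lowDays: 1,
  highDays: 3,
  assumptions: ["Design is already approved"],
};

const history = [
  { estimate: checkout, actualDays: 12 }, // inside 8 to 20
  { estimate: banner, actualDays: 5 },    // outside 1 to 3
];

console.log(needsSplit(checkout), needsSplit(banner), hitRate(history));
```

Keeping the range and the assumptions in the same record is the point of the sketch: when an assumption breaks, the estimate is visibly stale rather than silently turned into a commitment.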

AI Is Forcing Us To Write Good Code

- 🤖 AI forces better code practices — Agents struggle with messy codebases and, unlike humans, can't clean up their own mistakes, making previously "optional" good practices essential for success

- 📊 100% test coverage is a game-changer — Not about preventing bugs, but ensuring every line of AI-written code is verified with executable examples; creates a simple todo list and eliminates ambiguity about what needs testing

- 📁 File organization becomes an interface — Since AI tools navigate primarily through filesystem structure, thoughtful directory naming and many small, well-scoped files dramatically improve agent performance and context loading

- ⚡ Dev environments must be fast, ephemeral, and concurrent — Agents need quick feedback loops with sub-minute test suites, one-command environment setup, and the ability to run multiple isolated environments simultaneously

- 🔧 Strong typing eliminates whole classes of problems — TypeScript with semantic type names (like `UserId`, `WorkspaceSlug`) helps agents understand intent immediately and reduces the search space of possible actions

- 🚀 The "tax" of good practices pays dividends — What felt like optional overhead for human developers becomes essential infrastructure for AI agents, creating the codebase teams always hoped for

- 🎯 Remove degrees of freedom from AI — Strict linters, formatters, and automated enforcement of best practices constrain the LLM to only make correct choices, acting as essential guardrails
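
The semantic type names mentioned above (`UserId`, `WorkspaceSlug`) can be approximated in TypeScript with so-called branded types. The sketch below is one common way to do it, not necessarily what the article's author uses; the `Brand` helper, the validation rules, and `membershipKey` are all assumptions made up for the example.

```typescript
// Branded types: plain strings at runtime, but not interchangeable at compile time.
// The Brand helper and the validation patterns below are hypothetical.
type Brand<T, Name extends string> = T & { readonly __brand: Name };

type UserId = Brand<string, "UserId">;
type WorkspaceSlug = Brand<string, "WorkspaceSlug">;

// The only way to obtain a UserId is through validation.
function toUserId(raw: string): UserId {
  if (!/^u_[0-9]+$/.test(raw)) {
    throw new Error(`Invalid user id: ${raw}`);
  }
  return raw as UserId;
}

// Same idea for workspace slugs: lowercase words separated by hyphens.
function toWorkspaceSlug(raw: string): WorkspaceSlug {
  if (!/^[a-z0-9]+(-[a-z0-9]+)*$/.test(raw)) {
    throw new Error(`Invalid workspace slug: ${raw}`);
  }
  return raw as WorkspaceSlug;
}

// The signature states intent: a reader (or an agent) cannot swap the arguments
// without the compiler complaining.
function membershipKey(user: UserId, workspace: WorkspaceSlug): string {
  return `${workspace}:${user}`;
}

const user = toUserId("u_42");
const workspace = toWorkspaceSlug("acme-docs");
const key = membershipKey(user, workspace);

// membershipKey(workspace, user) would be rejected at compile time,
// as would membershipKey("u_42", "acme-docs") with raw strings.
console.log(key);
```

The brand exists only in the type system, so there is no runtime cost; the payoff is that a whole class of "passed the wrong string" mistakes becomes a compile error instead of a bug report.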

Dangerous advice for software engineers

- 🔪 Sharp tools vs. dangerous advice — Both can be hugely helpful or harmful depending on competence and judgment; giving the wrong person dangerous advice is like giving the wrong person production SQL access

- 🎯 Examples of dangerous advice — Make your own decisions about what to work on, deliberately break written company rules sometimes, take strong positions when uncertain, identify as a bit of a "grifter," avoid non-shipping activities

- 💡 Why dangerous advice matters — Strong engineers crave it like sharp tools; most career advice is "fake" written to avoid liability or impress people rather than actually help

- 🤐 Manager limitations — Managers almost never give dangerous advice even when needed because if you follow it wrong, it's much worse for them professionally than for you

- ⚖️ High-risk, high-reward nature — Dangerous advice is disproportionately useful to strong engineers and harmful to weaker ones; requires courage and judgment to follow effectively

- 🏢 Organizations aren't just written rules — The author argues against the "Seeing Like a State" mistake of over-prioritizing legibility; all communities have load-bearing illegible components that matter

- 🤔 Target audience consideration — If you don't feel comfortable following dangerous advice, you definitely shouldn't; but if you're already operating this way sometimes, you're probably not making a horrible mistake

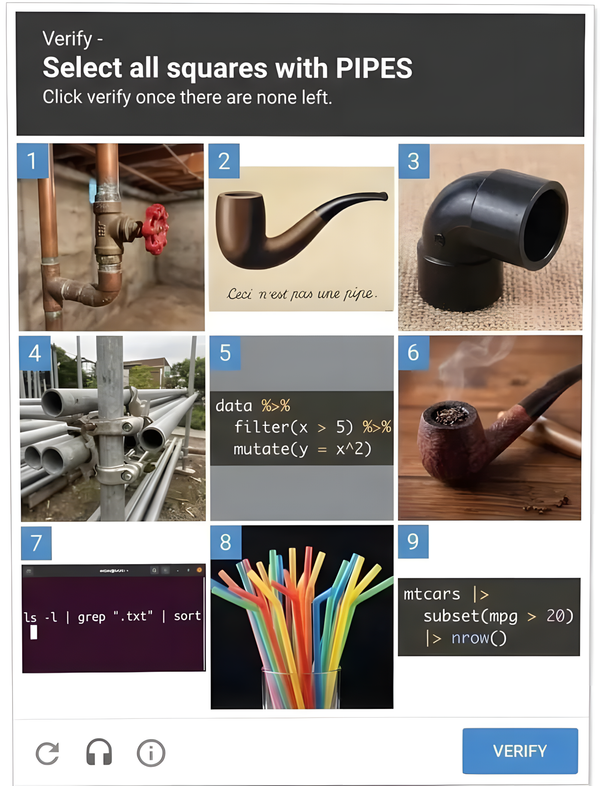

Match The Pipes

An executive skill

- 🍩 Toxic Management Culture — A CEO's donut ritual became a bizarre loyalty test, forcing employees to secretly throw away donuts to avoid Monday morning lectures about "not caring enough"

- 🚰 The Pipe Capacity Principle — Water flow through connected pipes is limited by the narrowest pipe, not the widest — expanding non-bottleneck pipes doesn't increase overall system capacity

- 👔 Executive Trade-off — Executives sacrifice hands-on detailed work for broader influence and greater rewards, but gain unique visibility across entire organizational processes

- 🔍 Finding Bottlenecks — Executives can identify the narrowest "pipe" in Product → Design → Engineering → Operations/Sales/Marketing chains by looking for upstream work piling up

- ⚡ Strategic Interventions — Only executives can reduce upstream output, expand bottleneck capacity, reroute work to non-ideal alternatives, and shift improvement focus when bottlenecks change

- 😡 Pressure vs. Systems Thinking — The author got emotional about "80-hour work week" mandates because pressure doesn't increase system capacity — it just stresses people while consequences fall on those least able to push back

- 💔 Human Cost — High-pressure environments cause mental health breakdowns, divorces, family trauma, and even suicide — permanent costs with minimal benefit

- 🛠️ Management Responsibility — Executives have "grave responsibility with life-changing consequences" and need proper management tools from experts like Drucker and Deming, not just pressure tactics
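
The pipe capacity principle above reduces to a small piece of arithmetic: the throughput of a chain is the minimum of its stage capacities. Here is a rough TypeScript sketch of that idea. The stage names follow the chain listed in the summary, but the `Stage` type, the function names, and all the capacity numbers are invented for illustration.

```typescript
// A stage in a delivery chain. Capacities are made-up "items per week".
interface Stage {
  name: string;
  capacityPerWeek: number;
}

// The bottleneck is the stage with the smallest capacity.
function bottleneck(stages: Stage[]): Stage {
  if (stages.length === 0) {
    throw new Error("At least one stage is required");
  }
  return stages.reduce((narrowest, stage) =>
    stage.capacityPerWeek < narrowest.capacityPerWeek ? stage : narrowest
  );
}

// The whole chain can only move as fast as its narrowest stage.
function systemThroughput(stages: Stage[]): number {
  return bottleneck(stages).capacityPerWeek;
}

// Return a copy of the pipeline with one stage's capacity changed.
function withCapacity(stages: Stage[], name: string, capacityPerWeek: number): Stage[] {
  return stages.map((s) => (s.name === name ? { ...s, capacityPerWeek } : s));
}

const pipeline: Stage[] = [
  { name: "Product", capacityPerWeek: 12 },
  { name: "Design", capacityPerWeek: 5 },
  { name: "Engineering", capacityPerWeek: 8 },
  { name: "Operations", capacityPerWeek: 10 },
];

// Doubling a non-bottleneck stage leaves throughput where it was.
const widerEngineering = withCapacity(pipeline, "Engineering", 16);

// Widening the bottleneck helps, and moves the bottleneck somewhere else.
const widerDesign = withCapacity(pipeline, "Design", 9);

console.log(systemThroughput(pipeline));         // 5
console.log(systemThroughput(widerEngineering)); // 5
console.log(systemThroughput(widerDesign));      // 8
console.log(bottleneck(widerDesign).name);       // Engineering
```

The last two lines illustrate why improvement focus has to shift: once Design is widened, Engineering becomes the narrowest pipe, and further investment in Design would again buy nothing.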

Slow down to speed up

Why AI makes the slow phases of work more important, not less.

- 🧠 System 1 vs System 2 Thinking — AI excels at fast, pattern-matching work (System 1) but human judgment is still needed for deliberate, analytical thinking (System 2) about what to build and why

- ⚡ The Speed Paradox — AI has made the slow phases of work more important, not less, because when execution is cheap and fast, the leverage shifts to the decisions that precede it

- 💸 The Cost of Wrong Decisions — A wrong requirement or flawed design assumption propagates faster through everything AI helps you build, making upfront thinking more valuable than ever

- 🕳️ The Illusion of Speed — AI can help you create technical debt faster by faithfully implementing flawed decisions in thousands of lines of confident-looking code that solves the wrong problem

- 📋 Thinking First Protocol — Spend time clarifying what you actually want before offloading work to AI — write down the problem, success criteria, and constraints first

- 🔍 AI for Deliberation — Use the same tool that accelerates execution to accelerate deliberation through pre-mortems, edge case generation, and requirement interrogation

- 🏔️ Hill Chart Methodology — Map work to "uphill" slow phases (figuring things out, high uncertainty) and "downhill" fast phases (clear path, pure execution)

- 🛡️ Managing Velocity Pressure — Combat "Can't you just use AI?" pressure by being explicit about which phase you're in, timeboxing slow work, and showing your thinking process

- 🎯 Strategic Slowness — Teams that ship fastest long-term are often those who slow down at the right moments — speed and slowness are tools for different phases, not opposites

That’s all for this week’s edition

I hope you liked it, and you’ve learned something — if you did, don’t forget to give a thumbs-up, add your thoughts as comments, and share this issue with your friends and network.

See you all next week 👋
